Tech for the Good of Humanity

Branka Marijan is a member of the Campaign to Stop Killer Robots and a Senior Researcher at Project Ploughshares, a Canadian non-governmental organization, where she focuses on security implications of emerging technologies.

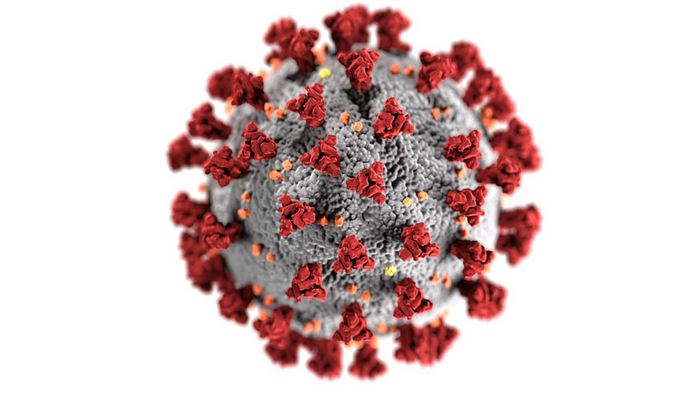

As COVID-19 compels the international community to expand the toolbox at its disposal to more effectively tackle the crisis, artificial intelligence (AI) companies and the broader tech sector are rallying to assist governments and public health authorities. Established companies and start-ups are developing means of early detection, performing digital contact tracing, 3D printing face shields, and even working on better detection of infections in chest X-rays. These incredible responses show that “tech for good,” that is, the creation and use of technology for the betterment of humanity, is more than an empty slogan, or at least can be.

At the same time, the COVID-19 crisis is both expanding and redefining traditional notions of national security and what constitutes effective preparedness. This pandemic has in fact brought into sharper focus the choices that are made about where resources are allocated, which technologies are developed, and for what purposes. These types of choices are and will be particularly important when it comes to applications of AI for national and global security.

Some security and defence applications of AI, which automate different tasks and improve efficiency, are not particularly problematic. However, countries such as the United States, Russia, China, and South Korea are also investing heavily in ever more autonomous weapons systems. For the 2021 fiscal year, the U.S. Pentagon has requested some $1.7 billion for research and development of AI-enabled autonomous technology.

As the Campaign to Stop Killer Robots warns, the concern is that new weapons systems will operate without meaningful human control. Such critical functions as the selection of targets and the employment of lethal force could be carried out by machines with little direction from human operators. In the near future, human involvement could be completely removed, an unprecedented step into uncharted ethical and legal territory.

Yet militaries continue to embrace emerging technologies. In December 2019, the U.S. Defense Advanced Research Projects Agency (DARPA, the Pentagon’s defence research arm) tested a swarm of 250 robots working together to navigate a mock urban environment and assist a small infantry unit. The swarm test, while still controlled by humans, showed the work being done to improve the real-time information gathering and navigation of machines in a complex environment. The U.S. Navy has also tested a network of AI systems where a commanding officer simply gave the order to fire. This level of human control is insufficient even if there is a human operator seemingly overseeing the system.

Other countries are also working on integrating autonomous technologies into military systems. Last year, Turkey even claimed that it had tested an autonomous drone in Syria. While unverified, and likely imprecise, Turkey’s claim highlights the need for closer scrutiny of the way in which new tech is being embraced for security purposes. Russia is working on adapting autonomous vehicle technologies and developing autonomous tanks. Undoubtedly, many more countries are watching these developments, focusing their research efforts on similar technologies, and considering security and defence applications of existing tech.

The reality of AI technologies, and very much what we are observing during the pandemic response, is that these are multi-use technologies. The same applications that can help us navigate roads can be used to track the movements of populations. While companies are developing the technology, it is ultimately countries and societies that decide which technologies to invest in and what applications to support. When it comes to applications of AI, the development of fully autonomous weapons stands as a clear example of the type of technology that urgently needs to be prohibited.

This is the time for governments keen to provide their militaries with modern AI-assisted and other state-of-the-art technology to seriously consider the types of capabilities they wish to prioritize to keep their citizens safe — and which boundaries are not to be crossed. And now is the time to prioritize technology that enhances and saves human lives. These choices will be critical to being better prepared to address pandemics and climate change, global threats which can clearly no longer be ignored.

If this article resonates with you, check out the Campaign to Stop Killer Robots website: www.stopkillerrobots.org or join the conversation @bankillerrobots #TeamHuman and #KeepCtrl.